Worst Mistakes to Avoid in Conversion Rate Optimisation

Conversion rate optimisation is all the rage these days. Many experienced online marketers now argue that “CRO is as important as SEO,” meaning that if you’re not testing, you’re leaving money on the table.

And yet, with more people entering the field, the same testing mistakes crop up over and over. While some of these are not unique to CRO, there are basic lessons worth learning before you dive head first into an ocean of AB testing.

Here are my top four mistakes to avoid with CRO testing:

1. Imaginary Lift

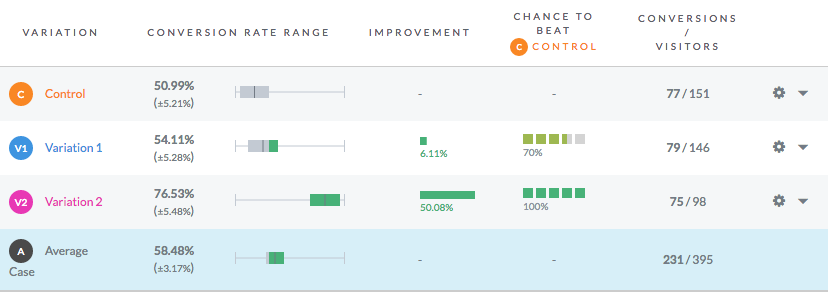

When performing a test, Optimizely and VWO show you graphs which make it easy to view the results and the statistical significance achieved. Here’s an example:

In this case, variation 2 shows a 50% improvement with a reported confidence of a whopping 100%. Nice result! This means you can go back to your client and tell them you have improved the conversion rate by 50% … right?

No!

Just because a test shows a 50% improvement in conversion rate does not mean that conversions have actually improved by 50%. All you can reasonably conclude at this point is that the second variation is probably converting at a higher rate than the original; you do not know by how much until you have more data.
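To see why a single “50% lift” figure says less than it seems, it helps to put an interval around it. Below is a minimal sketch using a standard two-proportion (Wald) interval from Python’s standard library. The function name and the visitor and conversion counts are hypothetical; this is not how Optimizely or VWO compute their own figures.

```python
from statistics import NormalDist


def lift_with_interval(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Return (relative lift, lower bound, upper bound) for variation B vs. A.

    The bounds come from a Wald interval on the absolute difference in
    conversion rates, divided by A's observed rate. Treating A's rate as
    fixed in that last step is a simplification.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    diff = p_b - p_a
    # Standard error of the difference between two independent proportions.
    se = (p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b) ** 0.5
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    return diff / p_a, (diff - z * se) / p_a, (diff + z * se) / p_a


# Hypothetical test: 40/2,000 conversions on the original, 60/2,000 on variation 2.
lift, low, high = lift_with_interval(40, 2000, 60, 2000)
print(f"measured lift {lift:.0%}, 95% interval {low:.0%} to {high:.0%}")
```

With these made-up numbers the measured lift is 50%, but the plausible range runs from roughly 2% to roughly 98%, which is why the headline figure should not be reported as the expected improvement.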

Let me repeat this: just because you measured a 50% lift doesn’t mean conversions will improve by 50%. The real improvement is usually much smaller; in practice, realising only 50-60% of the measured lift is common. This is because website performance tends to regress to the mean, so the longer you run the test, the smaller the difference you will measure.
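One way to see this regression in action is a small simulation. The sketch below assumes a hypothetical page where the variation’s true lift is 10%, declares a winner whenever a simulated test looks significant, and then re-measures each “winner” on fresh traffic. All parameter values are illustrative assumptions. The selection effect it shows (often called the winner’s curse) is one mechanism behind early measured lifts overstating the real one.

```python
import random
from statistics import NormalDist, mean


def run_test(rng, rate_a, rate_b, visitors):
    """Simulate one AB test and return the conversions for A and B."""
    conv_a = sum(rng.random() < rate_a for _ in range(visitors))
    conv_b = sum(rng.random() < rate_b for _ in range(visitors))
    return conv_a, conv_b


def winners_curse(rate_a=0.02, true_lift=0.10, visitors=2000,
                  n_tests=1000, confidence=0.95, seed=1):
    """Return (mean lift when a winner is declared, mean lift on fresh traffic)."""
    rng = random.Random(seed)
    rate_b = rate_a * (1 + true_lift)
    z_crit = NormalDist().inv_cdf(0.5 + confidence / 2)
    at_decision, on_follow_up = [], []
    for _ in range(n_tests):
        conv_a, conv_b = run_test(rng, rate_a, rate_b, visitors)
        if conv_a == 0:
            continue  # cannot compute a relative lift against zero
        p_a, p_b = conv_a / visitors, conv_b / visitors
        se = ((p_a * (1 - p_a) + p_b * (1 - p_b)) / visitors) ** 0.5
        if (p_b - p_a) / se > z_crit:  # B "wins" at the chosen confidence
            at_decision.append(p_b / p_a - 1)
            # Keep the same variation running on a fresh batch of visitors.
            new_a, new_b = run_test(rng, rate_a, rate_b, visitors)
            if new_a > 0:
                on_follow_up.append(new_b / new_a - 1)
    return mean(at_decision), mean(on_follow_up)


decision, follow_up = winners_curse()
print(f"true lift 10%, lift when declared: {decision:.0%}, on fresh data: {follow_up:.0%}")
```

Because only the tests where noise happened to favour the variation clear the significance bar, the lift recorded at the moment of “winning” sits far above the true 10%, while the follow-up measurement falls back toward it.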

When running AB tests, it’s critical to let each test run for at least 4 weeks to gather enough data, and preferably to collect 100 or more conversions in each group. Sometimes you will need to implement a winning variation early because the client demands it, or because the difference is large enough that you are confident it exceeds the initial goal (as in this case). Generally speaking, though, the more data and time you give a test, the more reliable your results will be.
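The “enough data” requirement can be made concrete with a standard sample-size calculation for comparing two conversion rates. This is a minimal sketch using the usual normal-approximation formula; the 3% baseline rate, 95% confidence, 80% power and weekly traffic figure are illustrative assumptions, and the helper names are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_group(baseline_rate, relative_lift, confidence=0.95, power=0.80):
    """Approximate visitors needed in EACH group to detect a given relative lift
    with a two-sided two-proportion z-test (normal approximation)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(0.5 + confidence / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def weeks_needed(visitors_needed, weekly_visitors_per_group):
    """Convert a per-group sample size into whole weeks of traffic."""
    return ceil(visitors_needed / weekly_visitors_per_group)


big = visitors_per_group(0.03, 0.20)    # hoping for a 20% lift
small = visitors_per_group(0.03, 0.05)  # hoping for a 5% lift
print(big, small, weeks_needed(big, 2500))
```

With a 3% baseline, detecting a 20% lift needs roughly 14,000 visitors per group, while a 5% lift needs about fifteen times as many. Converting that into weeks of real traffic is a quick sanity check before calling any test finished.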

2. Hacking, not Strategizing

Many of the people involved in the tech “startup” scene also identify themselves as “growth hackers.” I love hacking as much as anyone (this site and its concept were built through lots of trial and error), but CRO is not the same!

To get the best results with conversion rate optimisation, you need to have a strategy and cannot simply test through trial and error. The process usually looks like this:

- Gather Insight: Review your Google Analytics account to identify where visitors drop off. Review competitors to find areas where you can make yourself more competitive.

- Test Hypotheses: Queue up about 2-4 tests based on that insight, and measure each one, ideally for at least 4 weeks.

- Rinse and repeat: Look at the data, and come up with a few more ideas.
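For the “Gather Insight” step, finding the biggest drop-off is simple arithmetic once you have step counts from a funnel report. The sketch below assumes you have copied those counts out by hand; the step names, numbers and function name are hypothetical, and it does not call any Google Analytics API.

```python
def drop_off_report(funnel):
    """Given [(step name, sessions), ...] in funnel order, return the drop-off
    rate for each transition and the transition that loses the most visitors."""
    transitions = []
    for (step, count), (next_step, next_count) in zip(funnel, funnel[1:]):
        transitions.append((f"{step} -> {next_step}", 1 - next_count / count))
    worst = max(transitions, key=lambda item: item[1])
    return transitions, worst


funnel = [("Product page", 10000), ("Cart", 3000), ("Checkout", 1500), ("Purchase", 600)]
transitions, worst = drop_off_report(funnel)
for name, rate in transitions:
    print(f"{name}: {rate:.0%} drop off")
print("Start generating hypotheses at:", worst[0])
```

The transition with the largest drop-off is a natural place to focus the next batch of test hypotheses, rather than picking pages at random.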

Many amateur testers simply come up with ideas at random (“What if we changed the products on the homepage?”) rather than following a long-term testing strategy. When you do this, you end up with a lot of random tests at random times, and you limit the total conversion lift you can achieve.

3. Statistical Significance and AA Testing

Just because a test reaches statistical significance doesn’t mean that it’s valid.

One thing that may surprise new CRO professionals is the importance of “AA” testing. An “AA” test compares the conversion rates of two identical versions of a page, which helps confirm that your testing software is set up correctly. If the software shows a large difference in conversion rate, or a statistically significant result, between the two identical versions, something is probably wrong with your test setup. (Keep in mind that at a 95% significance threshold, roughly 1 in 20 AA tests will show a “significant” difference by chance alone.) It may not sound like a common problem, but you would be very surprised at how often the JavaScript used by VWO and Optimizely interferes with websites.
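How often does a correctly configured AA test look “significant” purely by chance? A quick simulation answers this. The sketch below runs many hypothetical AA tests in which both groups share the same true conversion rate (an assumed 3%) and counts how often a pooled two-proportion z-test still reports significance. The same p-value function can be applied to your own AA numbers; the example counts are made up.

```python
import random
from statistics import NormalDist


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = (pooled * (1 - pooled) * (1 / n_a + 1 / n_b)) ** 0.5
    if se == 0:
        return 1.0  # no conversions (or all conversions): nothing to compare
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def aa_false_positive_rate(rate=0.03, visitors=2000, n_tests=500, alpha=0.05, seed=7):
    """Share of simulated AA tests (identical true rates) that look significant."""
    rng = random.Random(seed)
    significant = 0
    for _ in range(n_tests):
        conv_a = sum(rng.random() < rate for _ in range(visitors))
        conv_b = sum(rng.random() < rate for _ in range(visitors))
        if two_proportion_p_value(conv_a, visitors, conv_b, visitors) < alpha:
            significant += 1
    return significant / n_tests


print(f"{aa_false_positive_rate():.1%} of simulated AA tests look significant")
print(f"p-value for a hypothetical AA result: {two_proportion_p_value(310, 10000, 298, 10000):.2f}")
```

With a working setup, the share hovers around the 5% threshold. If your real AA tests come up significant much more often than that, suspect the implementation rather than bad luck.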

The other issue people often miss is that standard significance tests, such as the z-test commonly used for conversion rates, rely on a normal approximation, which does not always hold. For a metric like revenue per visitor, where most visitors spend nothing and a few spend a lot, the data is heavily skewed, so assuming a normal distribution is somewhat naive. Smaller sites may have no option but to rely on the normal approximation, but for sites with enough data, it’s worth trying to suss out the true distribution to test against rather than making a blanket assumption.
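One distribution-free option for sites with enough data is the bootstrap: resample each group’s visitor-level data with replacement and read the interval straight off the resampled differences, without assuming normality. This is a swapped-in technique shown as a sketch, not what Optimizely or VWO do internally, and the skewed revenue data below is synthetic, generated by a hypothetical helper.

```python
import random


def bootstrap_diff_ci(a, b, n_boot=2000, confidence=0.95, seed=3):
    """Percentile bootstrap interval for mean(b) - mean(a)."""
    rng = random.Random(seed)
    diffs = []
    for _ in range(n_boot):
        # Resample visitors with replacement within each group.
        resample_a = rng.choices(a, k=len(a))
        resample_b = rng.choices(b, k=len(b))
        diffs.append(sum(resample_b) / len(b) - sum(resample_a) / len(a))
    diffs.sort()
    tail = (1 - confidence) / 2
    lower = diffs[int(tail * n_boot)]
    upper = diffs[int((1 - tail) * n_boot) - 1]
    observed = sum(b) / len(b) - sum(a) / len(a)
    return observed, lower, upper


def fake_revenue(rng, visitors, conv_rate, avg_order):
    """Hypothetical revenue per visitor: mostly zeros, a few skewed order values."""
    return [rng.expovariate(1 / avg_order) if rng.random() < conv_rate else 0.0
            for _ in range(visitors)]


rng = random.Random(42)
control = fake_revenue(rng, 1000, 0.03, 60.0)
variation = fake_revenue(rng, 1000, 0.035, 60.0)
diff, low, high = bootstrap_diff_ci(control, variation)
print(f"revenue per visitor difference {diff:.2f}, 95% interval {low:.2f} to {high:.2f}")
```

The trade-off is computation: the bootstrap needs visitor-level data and many resamples, which is one reason it suits larger sites better than a quick normal approximation does.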

4. Expecting Small Changes to Bring Big Impact

Changing a button color will NOT improve your conversion rate by 50%!

The web is littered with case studies of clients claiming to have doubled their conversion rate with minor changes. This almost never happens. In practice, huge conversion improvements come only from large, drastic changes to your website, or from the combined effect of many smaller tests.

Many people assume that changing a website’s headline or CTA will be enough to get a 20% improvement in conversion. Usually, though, these types of changes yield a 5-10% improvement at best. To get a lift of 20% or more, you’ve got to do some major redesign work or change something more fundamental about the page.
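The “combination of smaller tests” route is easy to quantify, assuming each win is independent and multiplies with the others. In reality, wins can overlap, and each measured lift may itself shrink over time, so treat this as an optimistic upper bound. The helper names are hypothetical.

```python
from math import ceil, log, prod


def compounded_lift(lifts):
    """Overall relative lift from a sequence of wins, assuming they multiply."""
    return prod(1 + lift for lift in lifts) - 1


def wins_needed(target_lift, lift_per_win):
    """How many equal-sized wins it takes to reach a target overall lift."""
    # Subtract a tiny tolerance so float rounding can't push an exact fit up by one.
    return ceil(log(1 + target_lift) / log(1 + lift_per_win) - 1e-9)


print(f"{compounded_lift([0.05] * 4):.1%}")  # four 5% wins
print(wins_needed(0.20, 0.05), wins_needed(0.20, 0.10))
```

Four 5% wins compound to roughly 21.6%, so reaching a 20% lift through small tests means winning four of them (or two 10% wins), each of which needs its own long, well-powered test.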

Some of the largest improvements we’ve achieved for clients have come from:

- Shipping Offers: Offering free shipping to customers is a HUGE change that typically leads to massive conversion improvements, usually 20% or more. You also need to check that profitability improves accordingly, but we’ve shown ways you can make it work.

- Drastic Choice Reduction: Reducing the number of CTAs by 30-50% can improve conversion by as much as 25% on e-commerce sites.

- Positioning and Competitive Advantage: Strong USPs (unique selling propositions) and stronger pricing or offers than your competitors are often needed.
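For shipping offers, checking whether free shipping actually pays comes down to profit per visitor. A minimal sketch, assuming the store absorbs the full shipping cost on every order and average order value stays the same; the margin, shipping cost and conversion figures are hypothetical.

```python
def profit_per_visitor(conv_rate, margin_per_order, shipping_cost=0.0):
    """Expected profit from one visitor: conversion rate x profit per order."""
    return conv_rate * (margin_per_order - shipping_cost)


def break_even_lift(margin_per_order, shipping_cost):
    """Relative conversion lift needed for free shipping to keep profit per
    visitor unchanged: solves (1 + L) * (margin - shipping) = margin for L."""
    if shipping_cost >= margin_per_order:
        raise ValueError("shipping cost wipes out the entire margin")
    return shipping_cost / (margin_per_order - shipping_cost)


before = profit_per_visitor(0.02, 30.0)  # customer pays for shipping
after = profit_per_visitor(0.02 * 1.20, 30.0, shipping_cost=6.0)  # free shipping, +20% conversion
print(f"before {before:.3f}, after {after:.3f}, break-even lift {break_even_lift(30.0, 6.0):.0%}")
```

In this made-up case, a 20% conversion lift still lowers profit per visitor; the lift has to reach 25% just to break even, which is why the profitability check matters as much as the conversion result.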

Some of our case studies involved a series of small changes, such as minor checkout or homepage tweaks, but almost all of the big wins we’ve achieved for clients have come from major changes, not just small tests.

Summary

Don’t expect CRO (or anything else in life!) to come immediately and easily. It takes hard work! Create a strategy, do your due diligence on test hypotheses, make large changes, and be sure you are measuring correctly. With all of these in place, you can be on track to achieve the kind of large conversion gains that so many site owners have enjoyed.